MegaSeats: A/B Testing “Hot Deals” to Improve Conversion

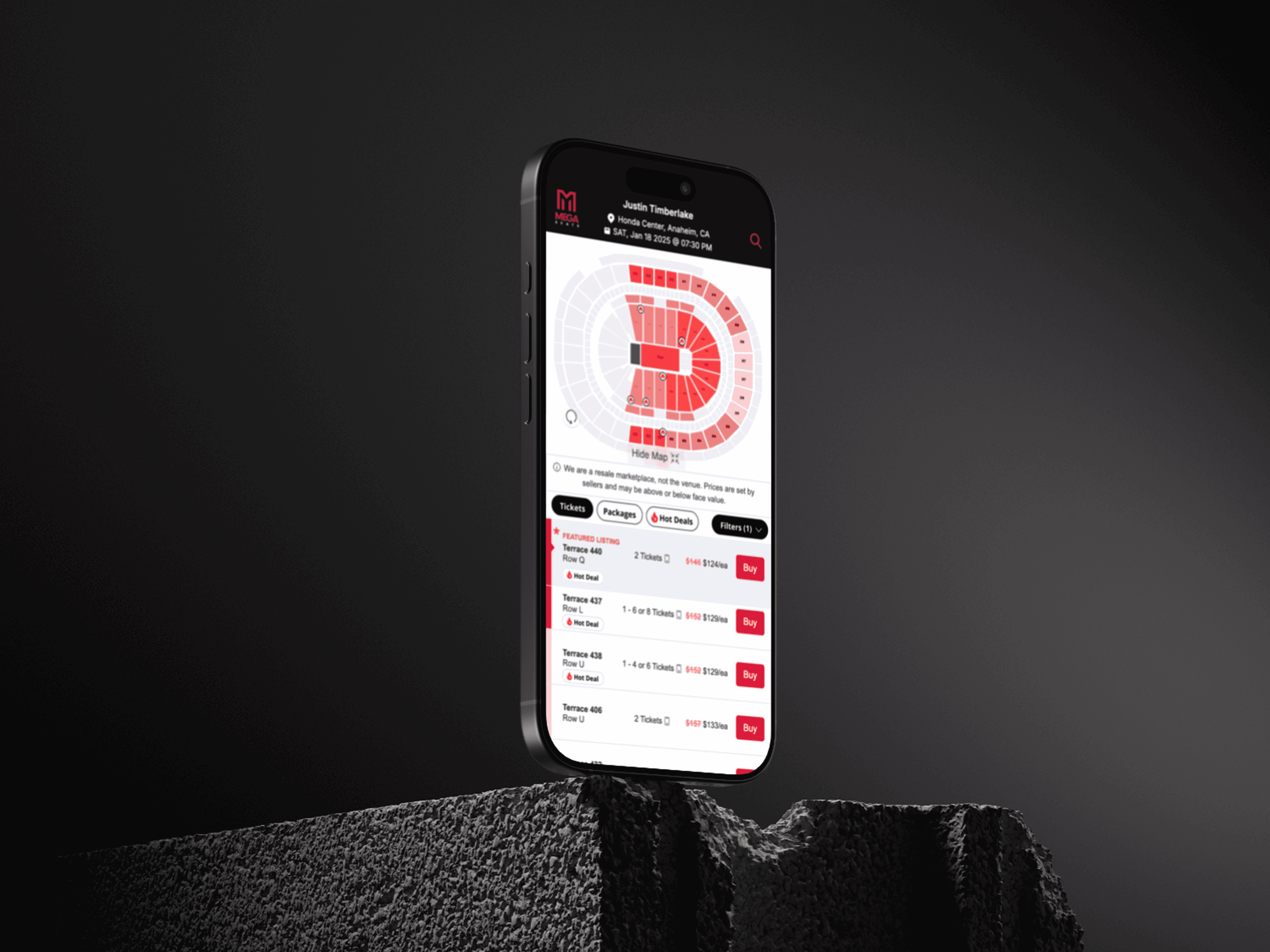

I led an end-to-end A/B testing initiative to optimize deal labeling and merchandising strategy, specifically to reduce cognitive overload in the browsing experience. As the sole designer on the project, I owned the full process, from hypothesis and UI design to experiment rollout and iteration. I introduced more intentional, high-signal deal indicators (like “Hot Deals” badges) to help users quickly identify valuable tickets without overwhelming the interface. The goal wasn’t to add more labels, but to design smarter ones that actually guided decision-making.